Meta Incremental Attribution: What It Is, What It Fixes, and Where It Still Falls Short

Meta incremental attribution is better than standard attribution. Here is what it does, what it misses, and what to use alongside it.

Meta incremental attribution is Meta's causality-focused attribution model. Instead of crediting conversions to ads based on clicks and view windows, it estimates which conversions actually would not have happened without the ad.

That is a meaningful shift. It is also not the full answer.

For years, platform attribution answered a weak question: did a conversion happen after an ad interaction? Meta's incremental attribution model tries to answer the better one: did the ad actually cause it?

Here is what it does, where it falls short, and how to use it without getting burned.

What Is Meta Incremental Attribution?

It is Meta's attempt to separate conversions your ads caused from conversions your ads simply touched.

Standard attribution gives credit based on click and view windows. If someone clicked your ad and bought within 7 days, or viewed it and bought within 1 day, that purchase gets credited to the ad, whether or not the ad had anything to do with the decision.

Incremental attribution asks a different question: would this person have bought anyway?

Meta uses machine learning trained on its historical Conversion Lift data to answer that question continuously. It applies holdout-style logic in the background: compare the conversion rate of people exposed to your ad against a modeled control group, then estimate the difference. That difference is your incremental lift.
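The comparison described above comes down to simple arithmetic. Here is a minimal sketch in Python, assuming you already have conversion counts for an exposed group and a control group. The function name and the sample numbers are hypothetical, and this is not Meta's actual model, which estimates the control group rather than observing it.

```python
def incremental_lift(exposed_conversions, exposed_users,
                     control_conversions, control_users):
    """Compare an exposed group against a control group.

    Returns (absolute_lift, incremental_conversions, relative_lift).
    Illustrative only: Meta models its control group, it does not hand you one.
    """
    if exposed_users <= 0 or control_users <= 0:
        raise ValueError("group sizes must be positive")

    exposed_rate = exposed_conversions / exposed_users
    control_rate = control_conversions / control_users

    # Difference in conversion rate between the two groups.
    absolute_lift = exposed_rate - control_rate
    # Conversions in the exposed group that the ad is estimated to have caused.
    incremental_conversions = absolute_lift * exposed_users
    # Lift expressed relative to the baseline (control) rate.
    relative_lift = absolute_lift / control_rate if control_rate > 0 else float("inf")
    return absolute_lift, incremental_conversions, relative_lift


# Hypothetical numbers: 2.0% conversion when exposed vs. 1.5% in control.
lift, extra, relative = incremental_lift(2_000, 100_000, 1_500, 100_000)
print(f"absolute lift: {lift:.4f}, incremental conversions: {extra:.0f}, "
      f"relative lift: {relative:.1%}")
```

In this made-up case, only about a quarter of the exposed group's 2,000 conversions would count as incremental. The rest would have happened anyway.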

Meta rolled this out in Ads Manager in April 2025. You can view the data retroactively from that date without switching your campaigns over. That means you can compare it to your current setup right now, without touching anything live.

For a broader look at how true incrementality is defined and measured outside platform walls, Stella's Incrementality page is a useful reference.

What Is the Difference Between Standard and Incremental Attribution?

This is where most of the confusion lives.

Standard attribution optimizes toward conversions inside your chosen window, usually 7-day click and 1-day view. The algorithm finds people likely to convert, which sounds good until you realize it often finds people who are going to buy regardless of your ad. You are paying for credit, not cause.

Incremental attribution optimizes toward conversions it estimates would not have happened without the ad. That shifts delivery toward people who are genuinely on the fence, not people already decided.

One practical consequence most coverage skips: when you switch campaigns to optimize using incremental attribution, reported conversion volume often drops and CPAs often shift. The learning phase resets. This is not a performance problem. It is the algorithm recalibrating toward a stricter definition of what counts. Expect a few weeks of instability before the new optimization signal stabilizes.

An analysis that shows why this matters: Seer Interactive analyzed over $1.05 million in Meta spend across six accounts in April 2025. Meta's incremental view said 87% of conversions were incremental. A GA4 cross-check put the number at 67%. That 20-point gap is not a rounding error. It is the distance between what Meta's own model reported and what an outside cross-check supported.

To understand where your own number should fall, real benchmark data from 225 incrementality tests across DTC brands shows a median iROAS of 2.31x, with an IQR of 1.36x to 3.24x and an 88.4% statistical significance rate. If your platform-reported ROAS is sitting meaningfully above that range, your attribution is probably doing some flattering work. The full breakdown is in Stella's 2025 DTC Digital Advertising Incrementality Benchmarks.
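To make that comparison concrete, here is a rough Python sketch that checks a ROAS figure against the benchmark range quoted above. It assumes the usual definition of iROAS (incremental revenue divided by spend). The function names and the example inputs are hypothetical, and only the 2.31x median and the 1.36x to 3.24x IQR come from the benchmark.

```python
# Benchmark figures quoted in the paragraph above.
BENCHMARK_MEDIAN_IROAS = 2.31
BENCHMARK_IQR = (1.36, 3.24)


def iroas(incremental_revenue, spend):
    """Incremental ROAS, assuming iROAS = incremental revenue / ad spend."""
    if spend <= 0:
        raise ValueError("spend must be positive")
    return incremental_revenue / spend


def compare_to_benchmark(roas, iqr=BENCHMARK_IQR):
    """Say where a ROAS figure sits relative to the benchmark IQR."""
    low, high = iqr
    if roas > high:
        return "above IQR"
    if roas < low:
        return "below IQR"
    return "within IQR"


# Hypothetical account: the platform reports 4.5x ROAS.
print(compare_to_benchmark(4.5))                     # above IQR
# Hypothetical test result: $23,100 incremental revenue on $10,000 spend.
print(compare_to_benchmark(iroas(23_100, 10_000)))   # within IQR
```

A platform figure landing above the range is not proof of inflation on its own. It is a prompt to check how much of that number would survive a real test.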

What Changed in Meta Attribution in March 2026?

This matters because a lot of teams are reading their dashboards wrong right now.

In March 2026, Meta changed how click-through attribution is defined. Previously, any click on your ad counted as a "click" for attribution purposes: likes, shares, saves, comments, and link clicks. Now, only actual link clicks qualify as click-through conversions.

Everything else moved to a new category called engage-through attribution, which carries a 1-day window instead of 7 days.

If your click-through conversions dropped after this change, your performance probably did not actually drop. Meta reclassified those conversions into engage-through. Total conversions across both categories should be roughly similar to before.
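One quick way to sanity-check this is to add the two new categories together and compare the total to your old click-through number for a comparable period. The sketch below does that in Python. The function name, the 10% tolerance, and the sample figures are all assumptions for illustration, not anything Meta publishes.

```python
def reclassification_check(before_click_through,
                           after_click_through,
                           after_engage_through,
                           tolerance=0.10):
    """Compare pre-change click-through conversions with the post-change
    click-through + engage-through total.

    `tolerance` is an assumed threshold for "roughly similar", not a Meta rule.
    """
    if before_click_through <= 0:
        raise ValueError("need a positive pre-change baseline")

    total_after = after_click_through + after_engage_through
    relative_change = (total_after - before_click_through) / before_click_through
    return {
        "total_after": total_after,
        "relative_change": relative_change,
        # True when the combined total is close to the old number, which
        # points to reclassification rather than a real performance drop.
        "looks_like_reclassification": abs(relative_change) <= tolerance,
    }


# Hypothetical account: 1,000 click-through conversions before the change,
# 780 click-through plus 200 engage-through after it.
result = reclassification_check(1_000, 780, 200)
print(result["total_after"], result["looks_like_reclassification"])  # 980 True
```

If the combined total is well below the old figure, something other than the definition change is probably going on, and that is worth investigating separately.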

Two things to keep straight:

- This is an attribution definition change. It is separate from incremental attribution.

- Incremental attribution is about how causality is estimated, not about which interaction types count.

Mixing those two things up leads to bad conclusions. Jon Loomer's full breakdown of how Meta attribution works in 2026 is the clearest reference if you want the complete picture on settings, windows, and the new engage-through category.

How Do You Actually Use Incremental Attribution?

You do not need to switch your campaigns over to start learning from it.

In Ads Manager: click the Columns dropdown, select Custom, then click Compare Attribution Settings. Check the Incremental Attribution box under Advanced Options. This adds incremental conversion columns alongside your standard data. Retroactive data is available from April 1, 2025.

Start by looking at the gap between your standard and incremental numbers.

Small gap (under 15%): Your campaigns are probably finding genuinely incremental conversions. Your optimization signal and your causal reality are reasonably aligned.

Large gap (30%+): You are likely over-indexing on audiences that would have converted anyway. That is usually heavy retargeting, branded traffic, or both. The ad is mostly taking credit for demand that already existed.

Use this as a diagnostic first. Understand what the gap is telling you before deciding whether to optimize campaigns using the incremental setting. For brands that want a continuous read on this without manually checking Ads Manager columns, always-on incrementality testing automates that ongoing measurement.
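Here is a small Python sketch of that diagnostic. It assumes the gap is measured as the share of standard-attributed conversions that the incremental column does not count, which is one reasonable reading of the thresholds above. The function names are hypothetical, and the 15% to 30% band is left unlabeled because the thresholds above do not cover it.

```python
def attribution_gap(standard_conversions, incremental_conversions):
    """Share of standard-attributed conversions not counted as incremental.

    Assumed definition: (standard - incremental) / standard.
    """
    if standard_conversions <= 0:
        raise ValueError("standard conversions must be positive")
    return (standard_conversions - incremental_conversions) / standard_conversions


def classify_gap(gap):
    """Apply the thresholds above: under 15% is small, 30% or more is large."""
    if gap < 0.15:
        return "small"
    if gap >= 0.30:
        return "large"
    # No guidance is given for this band, so it is left for judgment.
    return "in between"


# Hypothetical campaigns: (name, standard conversions, incremental conversions)
campaigns = [
    ("Prospecting - broad", 1_000, 900),
    ("Retargeting - 30d", 1_000, 600),
]
for name, standard, incremental in campaigns:
    gap = attribution_gap(standard, incremental)
    print(f"{name}: gap {gap:.0%} -> {classify_gap(gap)}")
```

Running this per campaign or ad set, rather than at the account level, makes it easier to see which audiences are driving the gap.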

The Three Biggest Limitations

1. It is still Meta measuring Meta

Incremental attribution is scoped entirely to Meta's ecosystem. It cannot tell you what is happening across paid search, email, organic, influencer, TV, or retail. If a customer saw your Meta ad, then searched your brand on Google and converted there, this model does not capture that.

For brands running spend across multiple channels, platform-reported incrementality will always give you a partial picture. That is not a knock on the feature. It is the reality of walled gardens. Every platform has a built-in incentive for its own incrementality numbers to look good. Cross-channel budget decisions need a measurement approach that sits outside any single platform, which is where media mix modeling fits into the stack.

2. It is modeled, not measured

There is a meaningful difference between a model that estimates causal lift and an experiment that measures it.

Meta's incremental attribution uses historical lift data and machine learning assumptions. It is directionally useful. It is not the same as running a test with a real holdout group you design and control.

When the decision is big enough to matter, you want the actual experiment. Modeled incrementality is a useful shortcut. It is not a substitute for experimental proof. For a full look at how real incrementality tests are designed and what they tend to find across DTC brands, the Stella incrementality testing guides are worth reading before you set one up.

3. Retargeting looks worse, and that is accurate

If you run a lot of retargeting, incremental attribution will probably show weaker performance than standard attribution. That is not the model malfunctioning. It is the model correctly identifying that people in small retargeting audiences were often going to convert regardless of whether they saw your ad.

Seer's analysis confirmed this directly: broad targeting and hyper-narrow segments like retargeting showed the lowest incremental performance across all accounts tested. The attribution was flattering. The incrementality was not.

This does not mean retargeting is useless. It means you now have a more honest read on how much it is actually contributing.

Comparison: Standard Attribution vs. Incremental Attribution vs. Lift Testing

When Should You Use a Lift Test Instead?

Use a proper lift test when the decision is big enough that being wrong would cost you real money.

Specifically:

- You want to know whether Meta is driving any net-new demand at all, not just taking credit for it

- You are validating a new audience or campaign format before scaling it

- You are making a cross-channel budget decision and need to know Meta's actual contribution

- Your branded or retargeting campaigns show strong attributed ROAS and you suspect they are harvesting organic demand

Incremental attribution is a useful daily signal. Lift tests are what you run when you need to be right.

The practical difference matters: lift tests are manual experiments you design with a defined start date, end date, and holdout group you control. Incremental attribution is Meta's model running continuously on Meta's assumptions. Both use holdout methodology. Only one is yours.
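When the holdout group is yours, you can also check whether the lift you observed is distinguishable from noise. The sketch below uses a standard two-proportion z-test in plain Python. It is one common way to read a holdout experiment, not a description of how Meta or any specific vendor analyzes lift tests, and the function name and sample numbers are hypothetical.

```python
import math


def two_proportion_z_test(test_conversions, test_users,
                          holdout_conversions, holdout_users):
    """Two-sided z-test for a difference in conversion rate between the
    group that saw ads and the holdout group that did not.

    Returns (z_statistic, p_value).
    """
    if test_users <= 0 or holdout_users <= 0:
        raise ValueError("group sizes must be positive")

    test_rate = test_conversions / test_users
    holdout_rate = holdout_conversions / holdout_users

    # Pooled rate under the null hypothesis that the ads changed nothing.
    pooled = (test_conversions + holdout_conversions) / (test_users + holdout_users)
    standard_error = math.sqrt(
        pooled * (1 - pooled) * (1 / test_users + 1 / holdout_users)
    )
    if standard_error == 0:
        raise ValueError("cannot test: no variation in outcomes")

    z = (test_rate - holdout_rate) / standard_error
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical test: 2.0% conversion with ads vs. 1.5% in the holdout.
z, p = two_proportion_z_test(2_000, 100_000, 1_500, 100_000)
print(f"z = {z:.2f}, p = {p:.3g}")
```

A small p-value says the difference is unlikely to be chance. It does not say the lift is large enough to justify the spend, which is a separate question answered by the size of the lift and the cost of getting it.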

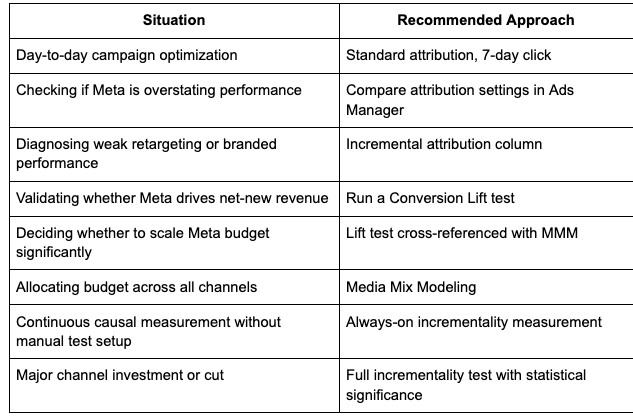

Decision Guide: What to Use When

Why One Tool Is Never Enough

Meta incremental attribution tells you how Meta thinks about what Meta caused. That is useful. It is not the same as knowing what your marketing actually drove across your whole business.

Google's Modern Measurement Playbook describes the right framework: attribution, incrementality experiments, and media mix modeling are not substitutes for each other. They answer different questions. Attribution gives real-time signal at the campaign level. Incrementality gives causal proof for specific decisions. MMM gives you cross-channel budget allocation that no single platform can provide on its own.

Ridge Wallet CMO Connor MacDonald described it well: "Incrementality has become the default measurement. The only rival it has is MTA, but one's a compass and one's a map. You need both."

The full version of that stack is incrementality as the compass, attribution as the map, and MMM as the altitude view. Each one answers a question the others cannot. Use all three and you are making decisions. Use one and you are still guessing, just with a better-looking spreadsheet.

The Bottom Line

Meta incremental attribution is a real improvement. It asks a better question than standard attribution, does it automatically, and requires no test setup to start reading the data.

But it is still Meta measuring Meta. It is still a model, not a measurement. And it tells you nothing about what is happening in your other channels.

Use it. Compare it to your standard numbers. If the gap is large, take that seriously. Then validate what matters most with a proper experiment, and use media mix modeling to make your cross-channel budget calls.

That is the full stack. Anything shorter is guessing with extra steps.

The Natural Next Step

If you have read this far, you already understand that platform-reported incrementality is a starting point, not a finish line.

The brands getting the clearest picture are running actual experiments, not just reading the Ads Manager column. Across 225 incrementality tests on DTC brands, the median iROAS came in at 2.31x. That number only exists because someone ran a real test instead of trusting the dashboard.

Stella is built for that: continuous incrementality testing and MMM for brands that want to know what is actually working, not what Meta says is working. If you want to see what your campaigns look like under a real test, that is where to start.
